Escalations are not failures

... or how I learned to let things break on purpose

Nobody likes escalations. (I feel like I start all my posts like this :D)

They feel like you're admitting defeat. Like you couldn’t handle the situation. Like you’re causing drama. Like you’re that person who always makes things bigger than they need to be.

So we avoid them. We try to solve problems quietly. We hope things will get better. We wait. And sometimes, waiting makes everything worse.

Here is a story about the time I let the system die. On purpose. Well, almost on purpose. It could have been avoided if business people listened to engineers more. But they won’t. And that’s OK. Not for the business, though.

The bug that nobody cared about

Years ago, I worked with a Product Owner on a platform that was growing rapidly. Good problem to have, right?

Except we had this bug. A nasty one. It was causing a memory leak in our system. Not huge - maybe a small percentage of traffic was affected. But here’s the thing about percentages: when your traffic doubles, your leakage doubles too. Or more than doubles.

I went to the PO. “We need to stop and fix this. We need to invest in platform stability. If we don’t, this will blow up.”

The answer was predictable. “We have roadmap commitments. We can’t pause feature development. Let’s revisit next quarter.” And my favorite: “The leakage is small. We can absorb it.”

I pushed back. Showed projections. Explained that the leakage was growing. Painted the picture of what would happen if we continued.

“Noted. But we need to ship these features first.”

So I documented everything. Every warning. Every projection. Every request to prioritize stability work. Made sure there was a paper trail. Funny thing - in a world where inboxes are flooded with messages from monitoring systems, reports, and other stuff, an email from a real person means: this is fucking serious!

And then I waited. What else could I do?

It took about six weeks.

The system crashed. Not a small hiccup - full outage. Customers couldn’t access the platform. Money was lost. Panic everywhere. But I had a recovery plan, so we got back up quickly. Hero moment, lol.

Senior leadership noticed. Obviously. There were investigations, war rooms, and lessons learned. And small group meetings. “How did this happen? Who is responsible? Why didn’t anyone see this coming?”

And that’s when my documentation became very useful. My “I told you so” package :)

“Actually, we did see this coming. Here are the warnings from six weeks ago. Here are the requests to prioritize stability work. Here are the projections that predicted exactly this scenario.”

The result? My team finally got approval to step back from feature work and build the stable platform I had been asking for. We got resources. We got time. We got executive support. All because things had to break first. And the PO started listening to me more.

Escalations as a tool

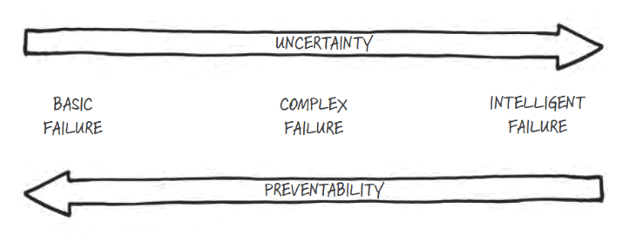

Here’s what I learned from my experience: sometimes you need to give someone a chance to fail, so you can succeed in the long run. In smart books it is called “intelligent failure”.

I’m not saying sabotage your projects. I’m not saying let things burn for fun. But sometimes, the only way to get attention for a real problem is to let the problem become visible.

When you escalate early - when you warn about risks before they materialize - people often don’t listen. Risk feels abstract. There’s always something more urgent. The cost of prevention seems higher than the cost of a problem that hasn’t happened yet.

But when you escalate at the right moment - when the problem is real and visible and painful - suddenly everyone cares. Suddenly resources appear. Suddenly your warnings are taken seriously.

The trick is being prepared for that moment.

When ignoring escalations leads to disaster

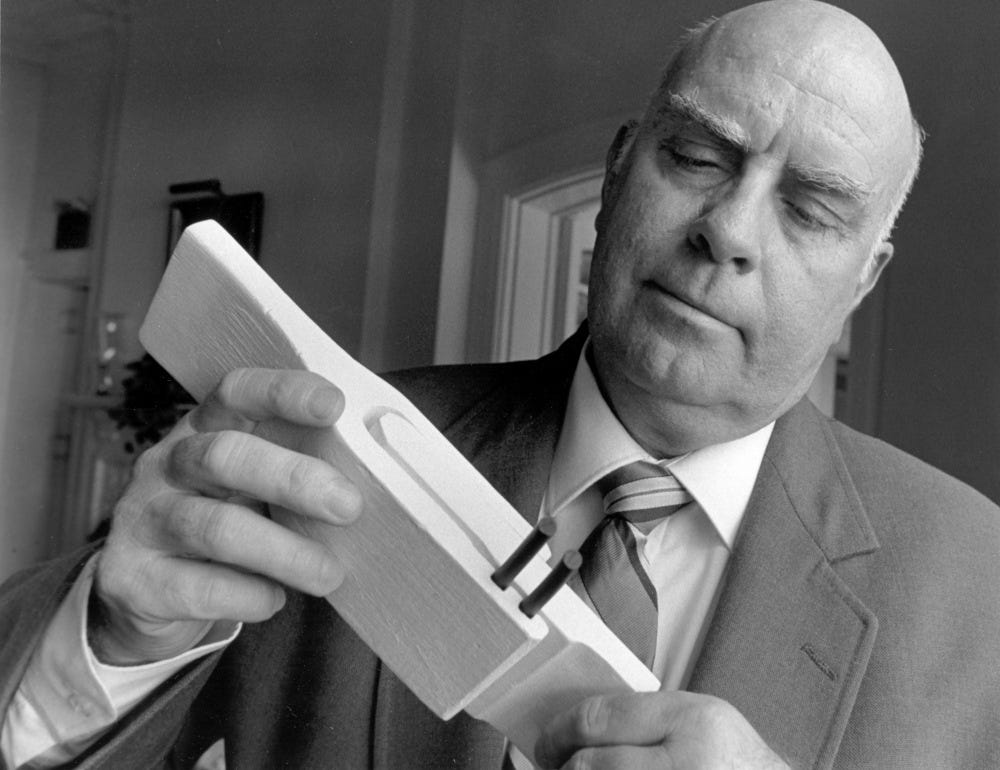

The Challenger disaster: when escalation fails

Here’s the darker side of this story.

Roger Boisjoly, an engineer at Morton Thiokol working on the O-ring problem, wrote a memo six months before the Challenger disaster that warned of “a catastrophe of the highest order - loss of human life” if the O-ring problem wasn’t fixed.

Six months. He warned them six months before.

The night before the launch, Boisjoly and his colleagues tried to stop the flight. Temperatures were due to fall to −1 °C (30 °F) overnight. Boisjoly felt this would severely compromise the O-ring's safety.

And what happened? The Morton Thiokol managers advised NASA that their data was inconclusive. NASA asked if there were objections. Hearing none, NASA decided to launch.

Seven people died.

The Rogers Commission, which investigated the disaster, concluded it was “an accident rooted in history,” given the evidence of O-ring damage before the fatal launch and the failure to heed the warnings of the Thiokol engineers.

The lesson here is brutal. Escalation only works if someone is listening. And sometimes the cost of not listening is catastrophic.

How to make escalations work for you

If you’re going to use escalation as a tool, you need to do it right.

First, document everything. Every warning you give, put it in writing. Every risk you raise, send an email. Every time you ask for resources and get denied, make sure there’s a record.

This is not about covering your ass. Well, not only about that. It’s about creating a timeline that shows you saw the problem coming. When things eventually escalate, you want to be the person who warned everyone, not the person who let it happen.

Second, be specific about risks. “This might cause problems” is useless. “If we don’t fix this bug, we will lose approximately X amount per month, growing at Y rate, and we risk a full outage within 8-12 weeks” is useful. Always back your escalations with data.

Third, propose solutions alongside problems. Don’t just say “this is broken.” Say “this is broken, here’s what we need to fix it, here’s how long it will take, here’s what we need to pause.”

When the moment of escalation comes, you want to have a plan ready. Not “we need to figure something out” but “here’s exactly what we do next.”
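To put a number on the “be specific about risks” point, here is a minimal sketch of the kind of projection I mean. It assumes a fixed amount of headroom and a leak that grows by the same percentage every week. The function name and all the numbers are made up for illustration; plug in whatever your own monitoring tells you.

```python
def weeks_until_exhausted(headroom, leak_per_week, weekly_growth, max_weeks=520):
    """Return the first week in which the cumulative leak reaches the headroom.

    headroom      -- how much you can lose before the outage (any unit)
    leak_per_week -- how much leaks in week 1 (same unit)
    weekly_growth -- growth of the weekly leak, e.g. 0.10 for 10% per week
    Returns None if the headroom is not exhausted within max_weeks.
    """
    if headroom <= 0:
        return 0
    leaked = 0.0
    for week in range(1, max_weeks + 1):
        leaked += leak_per_week
        if leaked >= headroom:
            return week
        # Next week's leak is bigger because traffic keeps growing.
        leak_per_week *= 1 + weekly_growth
    return None


# Hypothetical numbers: 1000 units of headroom, 40 units leaking in
# the first week, and the leak growing 10% week over week.
print(weeks_until_exhausted(headroom=1000, leak_per_week=40, weekly_growth=0.10))
```

The model is crude on purpose. A rough number with stated assumptions is much harder to wave away than “this will blow up someday.”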

Timing is everything

The hardest part of escalation is timing.

Too early, and nobody believes you. The problem isn’t visible yet. You look like you’re overreacting.

Too late, and you’re just part of the disaster. You didn’t warn anyone. You’re not the solution, you’re the problem.

The sweet spot is right before things break. When the trajectory is clear. When the evidence is mounting. When you can say “this will happen in two weeks” and be confident about it.

Sometimes you can’t time it perfectly. Sometimes you warn people and they don’t listen, and you have to let the failure happen. Shit happens. Black swans show up.

That’s okay. As long as you documented your warnings, as long as you have a plan ready, as long as you’re prepared to step in and fix things - the escalation becomes your moment to shine, not your moment of shame.

What to do after escalation works

So let’s say the escalation happened. The problem became visible. Now everyone is listening.

This is not the moment to say “I told you so.” Trust me, it’s tempting. But it’s not useful. Be the bigger person.

This is the moment to fix things and build systems that prevent the next escalation.

After our outage, we created a regular stability review. We agreed on thresholds that would trigger automatic prioritization of technical work. We built a process where technical risks get attention before they become emergencies.
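To make the threshold idea concrete, here is a rough sketch of how such a check could look. This is not what we actually ran; the metric names and limits are invented, and the only assumption is that you have current readings as plain numbers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    metric: str   # hypothetical metric name, e.g. "memory_growth_pct_per_week"
    limit: float  # agreed value above which stability work jumps the queue


def breached(readings, thresholds):
    """Return the names of metrics whose current reading is above the agreed limit.

    Metrics with no reading are skipped, not treated as breached.
    """
    return [
        t.metric
        for t in thresholds
        if t.metric in readings and readings[t.metric] > t.limit
    ]


# Hypothetical thresholds agreed with the PO in the stability review.
agreed = [
    Threshold("memory_growth_pct_per_week", 5.0),
    Threshold("error_rate_pct", 1.0),
]
current = {"memory_growth_pct_per_week": 7.5, "error_rate_pct": 0.4}

for metric in breached(current, agreed):
    print(f"{metric} is over its limit: stability work goes to the top of the backlog")
```

The code is trivial. The important part is that the limits were agreed in advance, so nobody has to win an argument when one of them is crossed.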

Netflix did something similar in the years after their 2008 database outage. They created Chaos Monkey - a program that randomly kills servers in production. Instead of waiting for failures, they started creating small controlled failures constantly. Knowing that this would happen frequently created a strong alignment among engineers to build redundancy and process automation to survive such incidents.
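If you have never seen the idea in action, here is a toy sketch of it. To be clear, this is not Netflix’s code or the real Chaos Monkey API. It is an in-memory simulation with made-up instance names, just to show the shape: kill one instance at random, then check that enough are left to keep serving.

```python
import random


def kill_random_instance(instances, rng):
    """Remove one randomly chosen instance from the pool and return its name."""
    if not instances:
        raise ValueError("no instances left to terminate")
    # Sort first so the pick is reproducible for a seeded rng.
    victim = rng.choice(sorted(instances))
    instances.remove(victim)
    return victim


def survives(instances, min_healthy):
    """The service survives if at least min_healthy instances remain."""
    return len(instances) >= min_healthy


# Hypothetical pool of three instances; we need two to keep serving.
pool = {"web-1", "web-2", "web-3"}
rng = random.Random(42)

victim = kill_random_instance(pool, rng)
print(f"terminated {victim}; still serving: {survives(pool, min_healthy=2)}")
```

If that last check ever comes back false in a test environment, you have just found your next outage before your customers did.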

That’s the real lesson. Escalation gets you attention. What you do with that attention determines whether you’ll need to escalate again.

The escalation mindset

Stop thinking of escalations as failures. Start thinking of them as tools.

Sometimes the only way to get resources for important work is to let unimportant work fail. Sometimes the only way to change priorities is to let the consequences of wrong priorities become visible. Sometimes the only way to be heard is to let the silence have consequences.

This doesn’t mean being passive-aggressive. It doesn’t mean hoping for failure. It means being strategic about when and how you raise issues.

Warn early. Document everything. Propose solutions. And if people don’t listen - be ready for the moment when they finally will.

From what I’ve learned in my career, escalations are not admissions of defeat. They’re tools for change.

The question is not whether to escalate. The question is when, and how, and whether you’re prepared to use the moment well.

Sometimes things need to break before they can be fixed properly. Your job is to make sure you’re ready when they do.